Weekly briefing · Inference Radar

The state of open-source inference, every week.

Automated, citation-backed briefings on the repositories that actually move the AI inference stack — vLLM, llama.cpp, MLX, TensorRT-LLM, and 130+ more. Produced by Inference Radar, our research arm for tracking the open-source inference ecosystem.

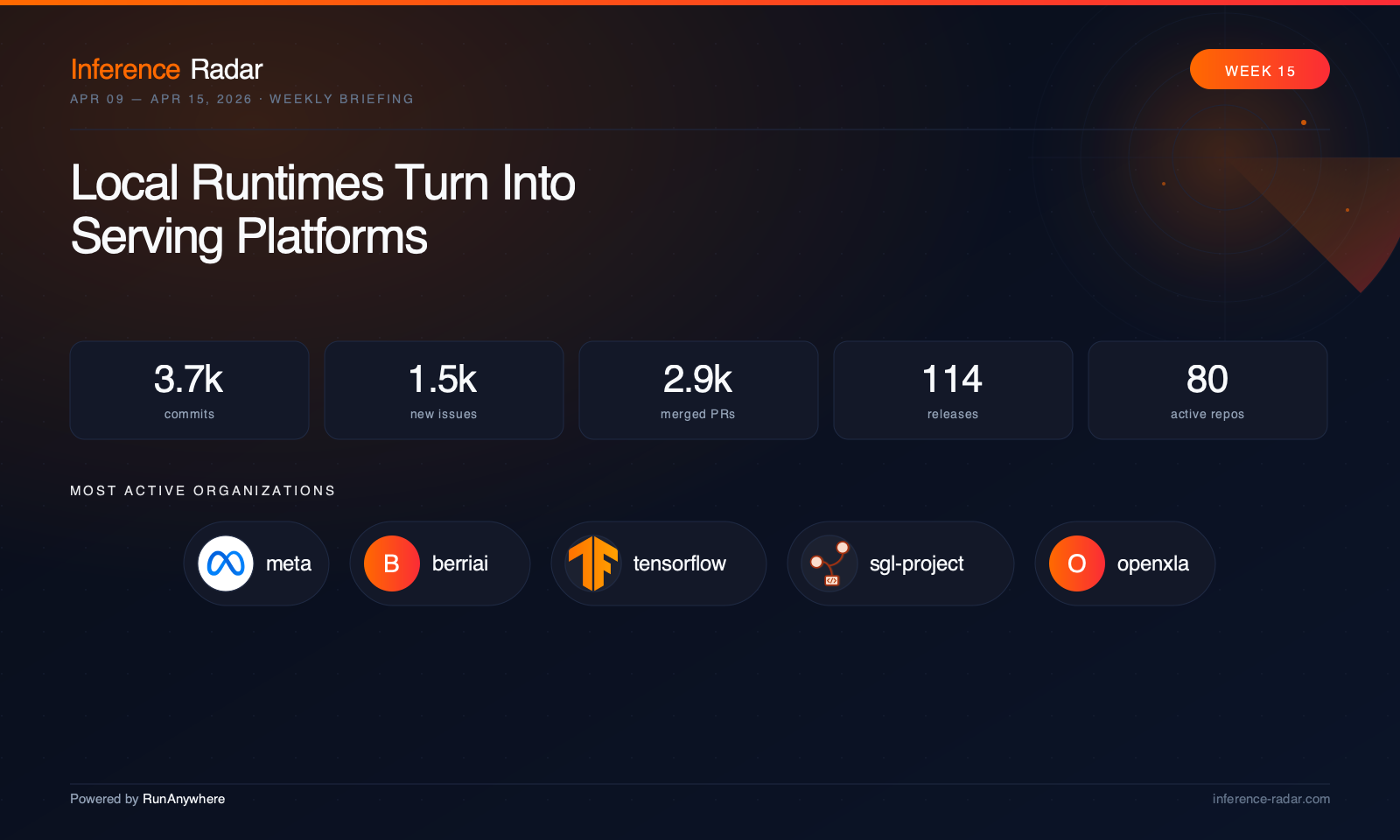

Latest issue


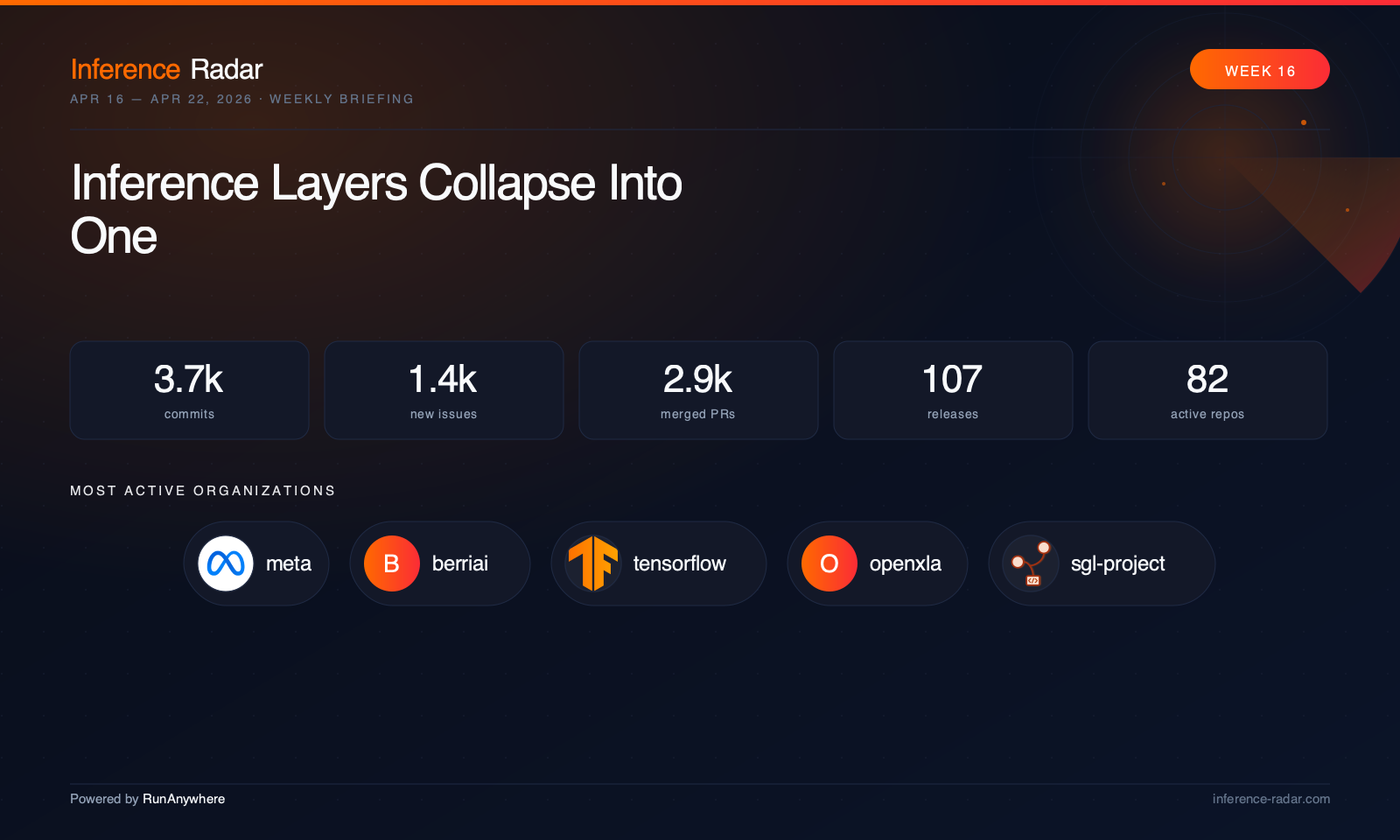

Inference Layers Collapse Into One

“This week’s code tells a clear story. Cloud servers, laptop runtimes, mobile frameworks, and compiler backends are converging on the same problems: KV cache pressure, tool-calling correctness, multimodal support, and hardware-specific execution paths. The old boundaries between ‘datacenter inference’ and ‘local AI’ are fading; what matters now is how fast each project can move fixes and optimizations across the whole stack.”

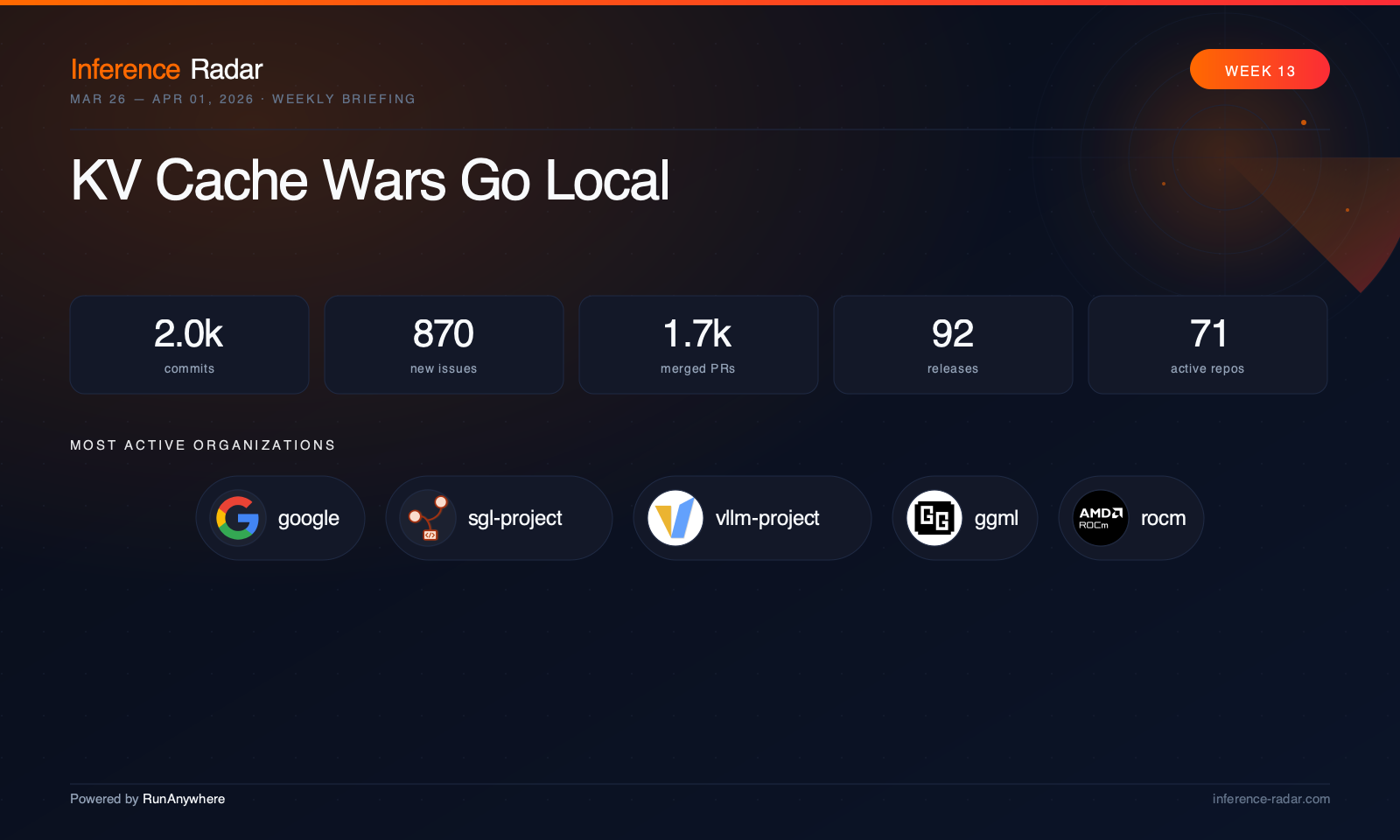

Archive

Every issue we've published.

Powered by RunAnywhere

The signal, not the noise.

A weekly briefing for engineers who ship inference infrastructure for a living. Every link is cited. Every claim is grounded in code.