Making every device an AI-native device.

We research and build inference engines from the metal up for the hardware you already own: custom kernels, operator fusion, unified memory optimization.


The problem

Most AI runs in the cloud. That won't scale.

We study inference at the hardware level. Here's what we've found.

Cost

Cloud inference costs $0.08–0.35 per minute for voice alone. Serving AI to 8 billion people through centralized GPU clusters is economically impossible. The compute has to move to the edge.

$0

marginal inference cost on-device
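The following back-of-envelope sketch uses the per-minute voice prices quoted above to make the cost argument concrete. The 10 minutes of voice per person per day is a hypothetical usage figure, not a measured one, and `annual_cloud_cost` is an illustrative name.

```python
# Back-of-envelope annual cost of cloud voice inference.
# ASSUMPTION: minutes_per_day is an illustrative usage figure, not from measurements.
DAYS_PER_YEAR = 365


def annual_cloud_cost(users: float, minutes_per_day: float,
                      usd_per_minute: float) -> float:
    """Yearly spend if `users` each use `minutes_per_day` of voice at a flat per-minute rate."""
    return users * minutes_per_day * DAYS_PER_YEAR * usd_per_minute


# Per-minute prices from the text; 10 minutes/day per person is hypothetical.
low = annual_cloud_cost(8e9, 10, 0.08)
high = annual_cloud_cost(8e9, 10, 0.35)
print(f"${low / 1e12:.1f}T to ${high / 1e12:.1f}T per year")
```

Under that assumption the formula gives about $292 to $1,277.50 per person per year, or roughly $2.3T to $10.2T per year across 8 billion people.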

Latency

A round trip to the cloud takes 300–400 ms at minimum. For real-time voice, vision, and autonomous systems, that’s too slow. Physics puts a floor under network latency; running on-device removes the round trip entirely.

<7ms

time-to-first-token (Qwen3-0.6B, M4 Max)
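Time-to-first-token and tok/s are easy to conflate. TTFT covers the wait until the first token arrives, including prompt prefill, while tok/s describes the steady decode rate afterwards. Below is a minimal sketch of computing both from per-token arrival timestamps. It assumes the caller records those timestamps on a monotonic clock. `measure_stream` and the example timings are hypothetical and are not part of any RunAnywhere SDK.

```python
from dataclasses import dataclass
from typing import Sequence


@dataclass
class StreamMetrics:
    ttft_ms: float           # request sent -> first token received
    decode_tok_per_s: float  # steady-state rate after the first token


def measure_stream(request_ms: float, token_ms: Sequence[float]) -> StreamMetrics:
    """Compute TTFT and decode throughput from per-token arrival times in ms.

    Hypothetical helper: assumes timestamps come from a monotonic clock.
    """
    if not token_ms:
        raise ValueError("no tokens were produced")
    ttft = token_ms[0] - request_ms
    # The first token includes prefill, so the decode rate counts only later tokens.
    span_s = (token_ms[-1] - token_ms[0]) / 1000.0
    rate = (len(token_ms) - 1) / span_s if span_s > 0 else 0.0
    return StreamMetrics(ttft, rate)


# Synthetic example: first token at 6 ms, then 100 more tokens 1.5 ms apart.
tokens = [6.0 + 1.5 * i for i in range(101)]
m = measure_stream(0.0, tokens)
print(f"TTFT {m.ttft_ms:.1f} ms, decode {m.decode_tok_per_s:.0f} tok/s")
```

With these synthetic timings, the example reports a 6.0 ms TTFT and about 667 tok/s decode.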

The models are ready

Small models now match the quality of models 250x their size. The bottleneck isn’t the model; it’s the runtime. That’s what we build.

668

tok/s on a single MacBook

New Research

Read our latest publications

Applied research on fast, private, hardware-native on-device AI inference.

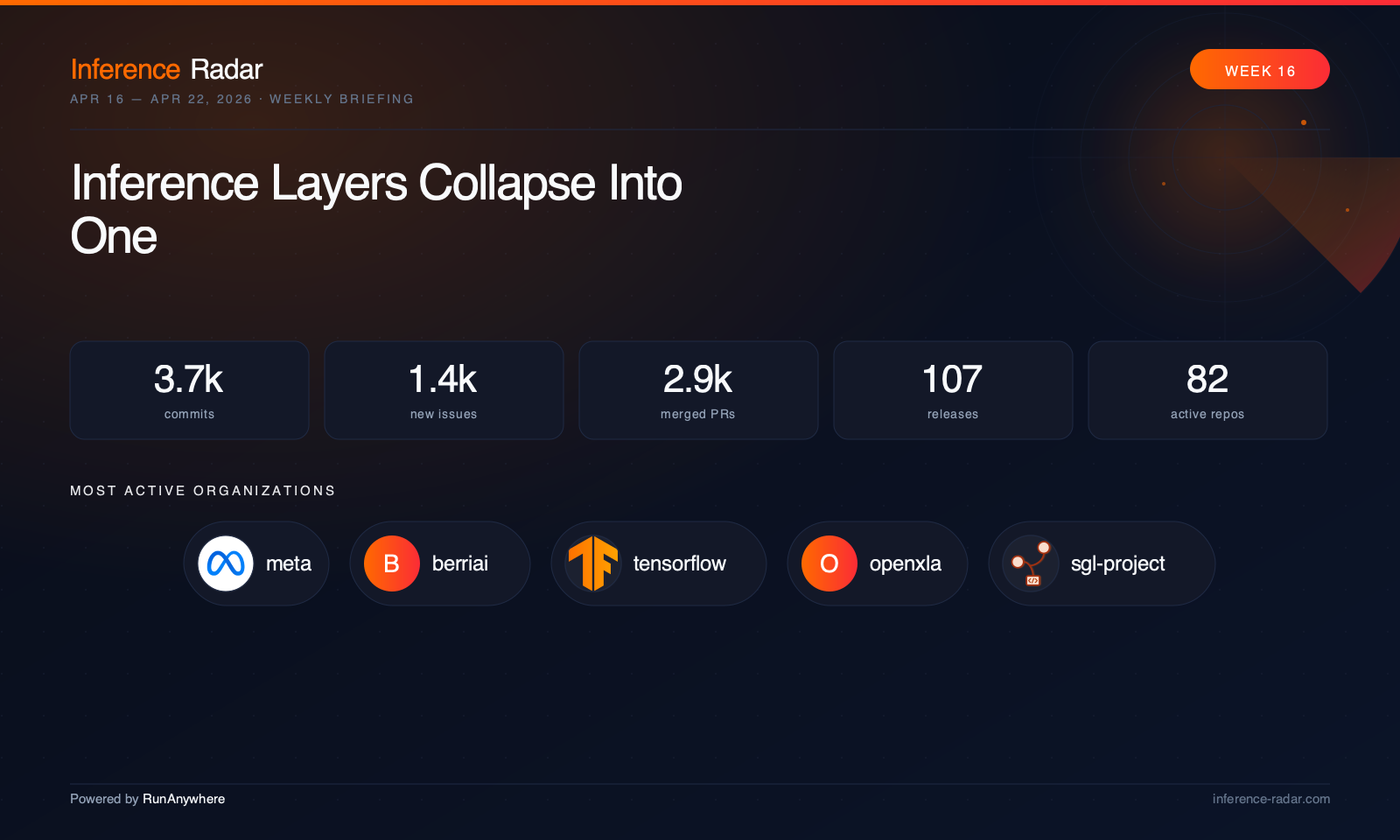

View all publications

Weekly Briefing · Inference Radar

The state of inference, weekly.

View all issues (4)

What We Build

Engines. SDKs. Observability.

Three layers that take on-device AI from research to production.

Inference Engines

01

MetalRT

Custom kernel runtime for the hardware you already own. 658 tok/s LLM decode, 101ms speech-to-text, 287 tok/s vision. Every kernel hand-written from scratch.

Developer SDKs

02

Cross-Platform

Swift, Kotlin, React Native, Flutter. One API across iOS, Android, and edge. Ship on-device AI with a few lines of code: LLM, STT, TTS, vision, voice agents.

Observability

03

Control Plane

Fleet dashboard, OTA model updates, policy-based routing, inference analytics. Manage thousands of devices without app store releases.

Our approach

We build from the metal up.

Custom GPU kernels, operator fusion, unified memory optimization. On a single MacBook, that stack delivers 668 tok/s LLM decode and 287 tok/s vision inference.

We write GPU kernels from scratch — hand-designed memory layouts, fused operators, and custom Metal shaders that bypass every generic abstraction layer. MetalRT achieves 668 tok/s LLM decode on Apple Silicon. Every kernel targets the specific hardware it runs on.
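Operator fusion merges consecutive operations into one kernel, so intermediate results never make a round trip through memory. Below is a minimal CPU-side sketch of the idea. It uses a SwiGLU-style gated activation, `silu(gate) * up`, which is common in LLM feed-forward layers, as a hypothetical example. This is not MetalRT's kernel code. On a GPU the saving shows up as reduced device-memory traffic, not as fewer Python list allocations.

```python
import math


def silu(x: float) -> float:
    """SiLU (x * sigmoid(x)), arranged to avoid overflow in math.exp for large |x|."""
    if x >= 0:
        return x / (1.0 + math.exp(-x))
    e = math.exp(x)
    return x * e / (1.0 + e)


def gated_unfused(gate, up):
    # Two passes: an intermediate activation buffer is written, then read back.
    act = [silu(g) for g in gate]
    return [a * u for a, u in zip(act, up)]


def gated_fused(gate, up):
    # One pass: each input element is read once and each output written once.
    return [silu(g) * u for g, u in zip(gate, up)]


def traffic_elements(n: int, fused: bool) -> int:
    """Elements moved through memory (reads plus writes) for n-element inputs."""
    # Unfused: read gate, write act, read act, read up, write out -> 5n
    # Fused:   read gate, read up, write out                       -> 3n
    return 3 * n if fused else 5 * n


gate, up = [-2.0, 0.0, 1.5], [1.0, 3.0, -2.0]
print(gated_fused(gate, up) == gated_unfused(gate, up))  # True: same math, fewer passes
```

Both versions perform the same floating-point operations in the same order, so the results match exactly. Fusion changes only how often data crosses the memory bus: 3n element transfers instead of 5n in this example.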

The shift from cloud to edge will be defined by whoever builds the best runtime. We publish our research openly, ship production SDKs across Swift, Kotlin, React Native, and Flutter, and make the engine available on GitHub.

Backed by Y Combinator, we are building the infrastructure layer for on-device AI at scale, starting with Apple Silicon, then Qualcomm, then Intel. We build for the hardware people already own.

Inference Stack

iOS · macOS · Android

RunAnywhere.load("llama-3.2-1b")

Swift · Kotlin · React Native · Flutter

Cross-platform bindings → C++ core

C++ Inference Engine · Quantized Weights · KV Cache

Orchestrates graph execution on unified memory

Hand-written Metal Shading Language

We write every GPU kernel from scratch

M1 · M2 · M3 · M4 · Unified Memory · 800 GB/s

simd_sum · threadgroup_barrier · [[buffer(0)]]
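Single-stream decode on unified memory is usually limited by how fast weights stream out of memory, not by arithmetic. A rough ceiling is memory bandwidth divided by the bytes read per generated token. The sketch below assumes every quantized weight is read once per token and ignores KV-cache and activation traffic. The 0.6B-parameter, 4-bit model is a hypothetical example. The 800 GB/s input is the top figure in the stack above, and actual bandwidth varies by chip.

```python
def decode_upper_bound_tok_s(params: float, bits_per_weight: float,
                             bandwidth_gb_s: float) -> float:
    """Memory-bound ceiling for single-stream decode, in tokens per second.

    Assumes each weight is read once per generated token; ignores KV-cache reads,
    activations, quantization scales, and compute time, so real runtimes land below it.
    """
    bytes_per_token = params * bits_per_weight / 8
    return bandwidth_gb_s * 1e9 / bytes_per_token


# Hypothetical: nominal 0.6B parameters at 4 bits per weight, 800 GB/s peak bandwidth.
print(f"{decode_upper_bound_tok_s(0.6e9, 4, 800):.0f} tok/s ceiling")
```

Under these assumptions the ceiling is about 2,667 tok/s. Measured throughput sits below it, and the gap roughly indicates how much headroom kernel and memory-layout work can still recover.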

Team

“We left AWS and Intuit to write custom kernels by hand. Because the future of AI isn't in the cloud - it's on every device you already own.”

Founders

Built SDKs used by 50M+ users at Intuit. Leads product, go-to-market, and the vision for making every device AI-native.

Choose your seat

GPU Kernel Engineer

Remote

ML Inference Researcher

Remote

Mobile SDK Engineer

Remote

If you're interested in joining, contact founders@runanywhere.ai.

In the press

Latest news.

What the industry is saying about RunAnywhere and on-device AI.